Over the past few weeks, it has felt like there's been a significant AI announcement every day.

The latest, from today, is the announcement of Groq (not to be confused with Grok, the chatbot from xAI):

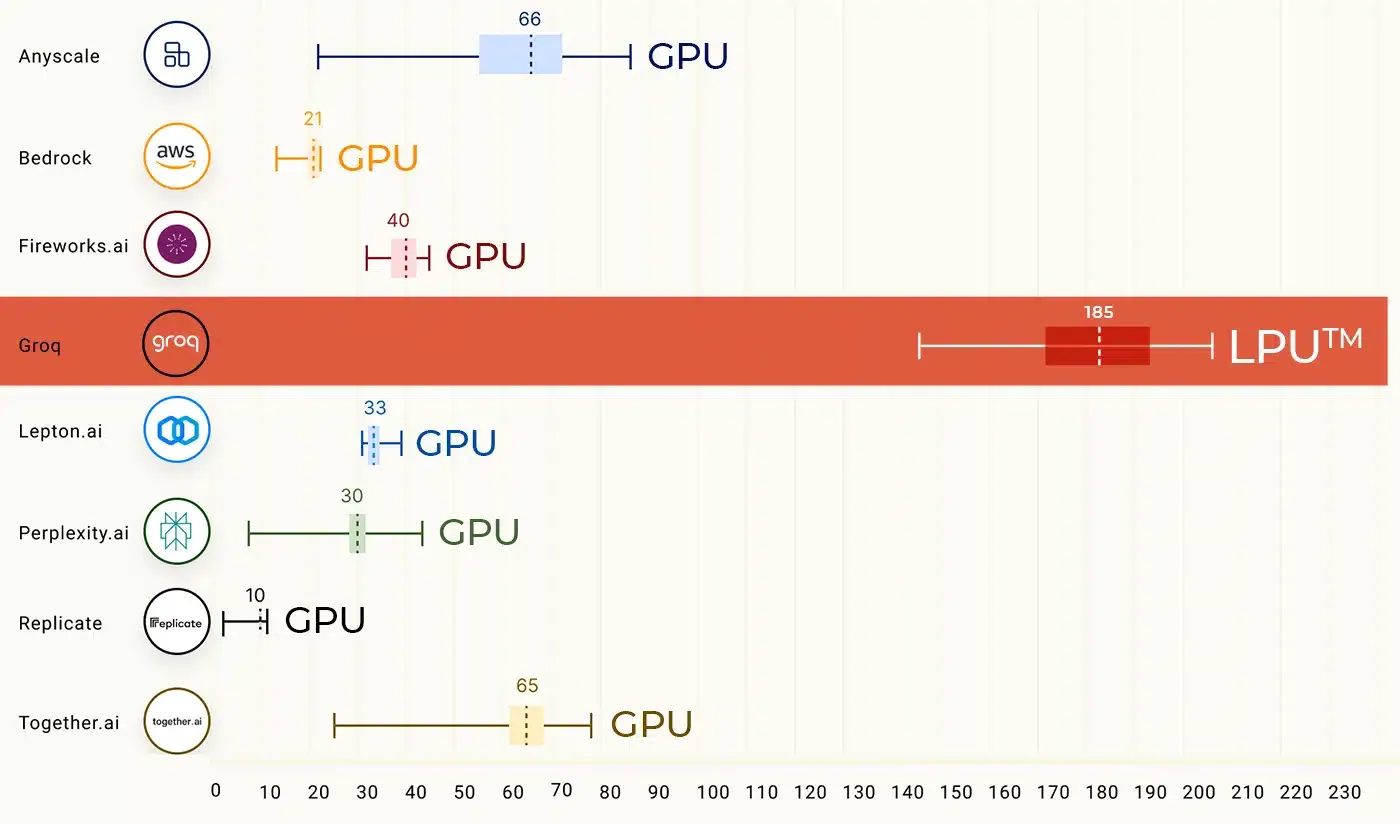

Inference speeds on Groq are significantly faster than those of any other provider currently available:

This not only improves the user experience, but also opens up new use cases, such as holding a real-time conversation with an LLM whose responses are pushed to the user through text-to-speech (TTS).
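To make the TTS use case a bit more concrete, here is a minimal sketch of one way it could work: buffer the streamed LLM output and hand each complete sentence to a speech engine as soon as it is ready, rather than waiting for the full response. Everything here is an assumption for illustration. `fake_llm_stream` and `speak` are hypothetical stand-ins, not part of Groq's API or any TTS library, and the sentence detection is deliberately naive (it would split on abbreviations and decimals).

```python
from typing import Iterable, Iterator

# Characters treated as the end of a sentence (naive, for illustration only).
SENTENCE_END = (".", "!", "?")


def sentences_from_tokens(tokens: Iterable[str]) -> Iterator[str]:
    """Group streamed text fragments into complete sentences."""
    buffer = ""
    for token in tokens:
        buffer += token
        # Flush as soon as the buffer ends with sentence punctuation.
        if buffer.rstrip().endswith(SENTENCE_END):
            yield buffer.strip()
            buffer = ""
    # Flush whatever is left when the stream ends.
    if buffer.strip():
        yield buffer.strip()


def fake_llm_stream() -> Iterator[str]:
    """Hypothetical stand-in for a streaming LLM response."""
    yield from ["Hello", " there", ".", " How", " can", " I", " help", "?"]


def speak(sentence: str) -> None:
    """Hypothetical stand-in for a TTS call; here it only prints."""
    print(f"[TTS] {sentence}")


if __name__ == "__main__":
    for sentence in sentences_from_tokens(fake_llm_stream()):
        speak(sentence)
```

The faster the tokens arrive, the sooner the first sentence can be spoken, which is why inference speed matters so much for voice interfaces.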

Here's a demo of Groq in action:

The fact that they've achieved this significant advance in inference speed through specialist hardware is another indication of why Sam Altman is said to have been particularly focused on raising investment for hardware in recent weeks.