I previously wrote about how I set up Stable Diffusion to work within a Next.js web application. Here I'm going to try to work out how to consistently improve the quality of the images I generate with Stable Diffusion.

Focusing on a specific style

I already found in my previous post on the topic that adding "trending on artstation HQ, 4k, detailed" to the end of the prompt improved the results that I was seeing, so I'm going to begin with that by default.
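As a rough sketch of what "by default" could look like in the Next.js app, a small helper can append the style modifiers to whatever subject is typed in. The names `STYLE_SUFFIX` and `withStyleSuffix` are my own for illustration, and joining with a comma is an assumption rather than anything Stable Diffusion requires.

```typescript
// Style modifiers quoted in the post, appended to every prompt by default.
const STYLE_SUFFIX = "trending on artstation HQ, 4k, detailed";

// Hypothetical helper (not part of any library): appends the suffix to a subject.
// Assumption: the subject and the modifiers are separated by a comma.
function withStyleSuffix(subject: string, suffix: string = STYLE_SUFFIX): string {
  // Drop surrounding whitespace and any trailing commas so we don't emit ", ,".
  const trimmed = subject.trim().replace(/[,\s]+$/, "");

  // Avoid appending the suffix twice if the prompt already ends with it.
  if (trimmed.toLowerCase().endsWith(suffix.toLowerCase())) {
    return trimmed;
  }

  // An empty subject falls back to the modifiers on their own.
  return trimmed.length > 0 ? `${trimmed}, ${suffix}` : suffix;
}

const lakePrompt = withStyleSuffix("a lake at sunrise");
// lakePrompt is "a lake at sunrise, trending on artstation HQ, 4k, detailed"
```

Keeping the suffix in one constant makes it easy to swap in a different set of modifiers later and compare the results side by side.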

I'm going to focus on images designed to be as photorealistic as possible. Pieter Levels has already done this to great effect with his project This House Does Not Exist, where he renders modern architectural photos using Stable Diffusion.

Lakes

I decided to focus on nature scenes first, starting with lakes. I feel that lakes are a more complicated subject because the image has to accurately portray reflections on the water.

Woodlands

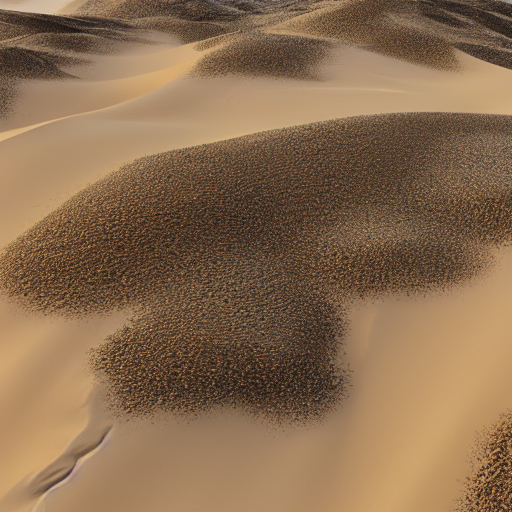

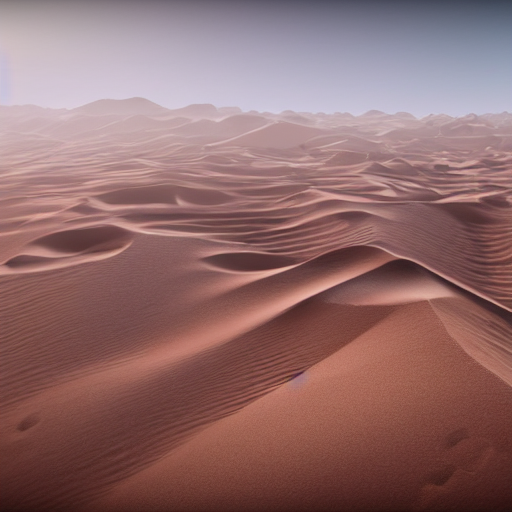

Deserts

Improving prompts with GPT-3

I decided to test whether prompts could be improved automatically with GPT-3.